Professional Bio

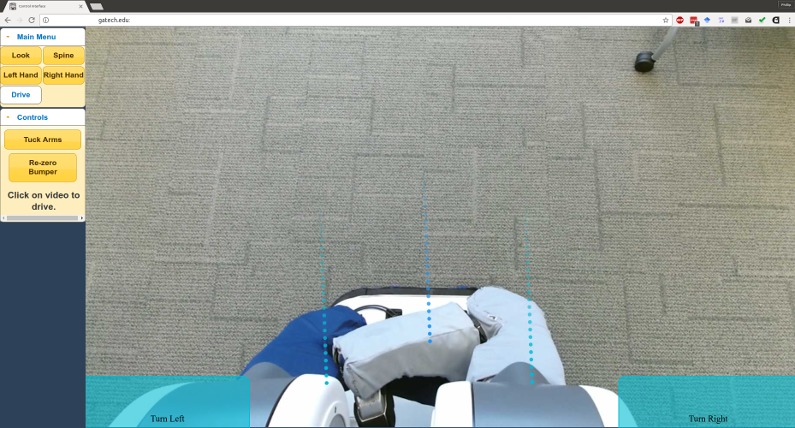

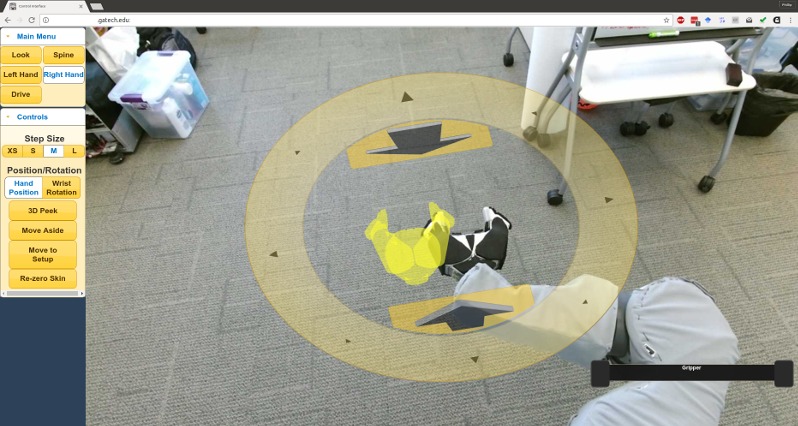

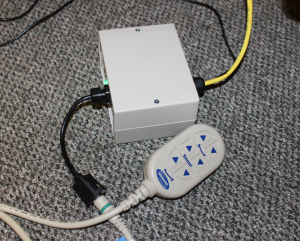

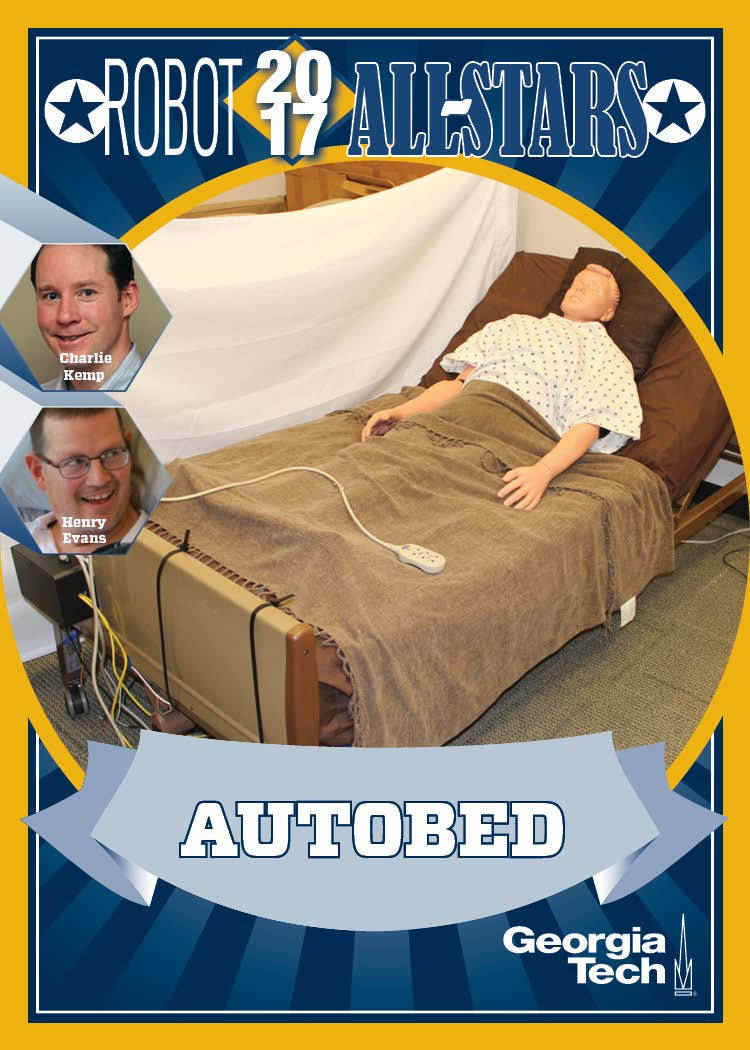

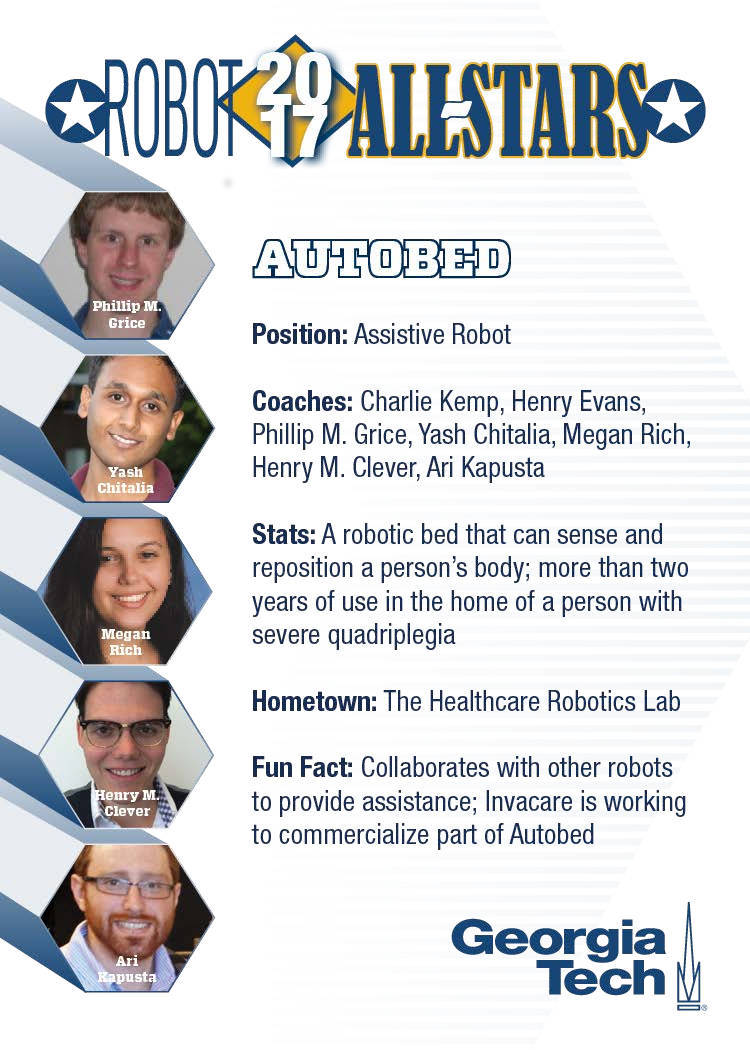

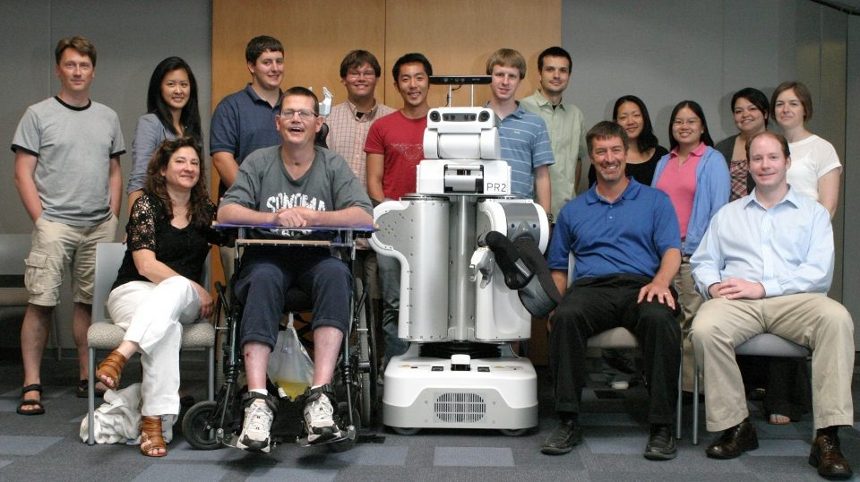

I am a roboticist currently working as a Principal Robotics Software Engineer at Blue River Technology, developing automation for the future of farming. Previously, I worked at iRobot and Bossa Nova Robotics. Before joining Bossa Nova, I earned my Ph.D. in Robotics at the Healthcare Robotics Lab at Georgia Tech. My experience spans research-, business-, and consumer-grade robotic systems, indoor and outdoor applications in diverse environments, and development at every level of the robotics stack, including hardware integration, diagnostics and monitoring, perception pipelines, fleet management, and web interface design. From this broad background, I have developed expertise in robotic systems design and integration, production-quality robotic software development practices, and human-robot interaction, especially human support of autonomous systems.

Personal Bio

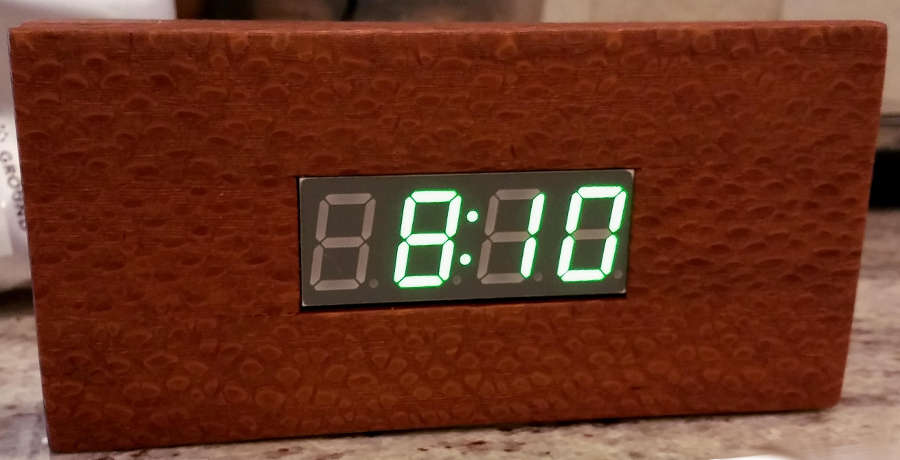

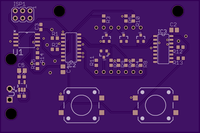

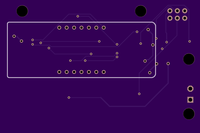

When not advancing the future of robotics, I like to spend my time with my wife, Heather, and our dogs, Seamus and Sadie. On most nights, you will find us in the kitchen trying new recipes or perfecting old ones (with the dogs making sure any dropped food never hits the floor). Heather and I are active in our local church and enjoy hiking and home improvement projects, including a recent update to our master bathroom. Every fall we follow college football, especially the Big Ten. Being from Ohio, I have always been an Ohio State Buckeyes fan, while Heather, after attending Luther College in Iowa, now supports the Hawkeyes. I am also usually working on at least one side project, which might range from woodworking to embedded microcontrollers, or possibly both. See my projects page for more details.